Although Apple has been an active champion of augmented reality for quite some time, the company has made little real progress in developing it, apart from the eye-contact correction feature in FaceTime and the ToF sensor in the iPad Pro 2020. The company that really has moved this technology forward is Google. The search giant has managed to take augmented reality beyond entertainment such as 3D animals and put it to practical use by adding an AR mode to its own maps, whose functionality has recently grown even further.

Google Maps has learned to determine location using the camera and AR

In its latest update, released this week, Google Maps for Android gained a calibration feature that uses augmented reality mode. It lets you use the smartphone's camera to determine your location more accurately.

How to calibrate Google Maps

Now you don't need GPS to determine your location in Google Maps

Just take the device out of your pocket, open Google Maps, start the AR calibration mode and scan your surroundings. Based on the data it receives, Maps will identify the visible objects and work out where you are.

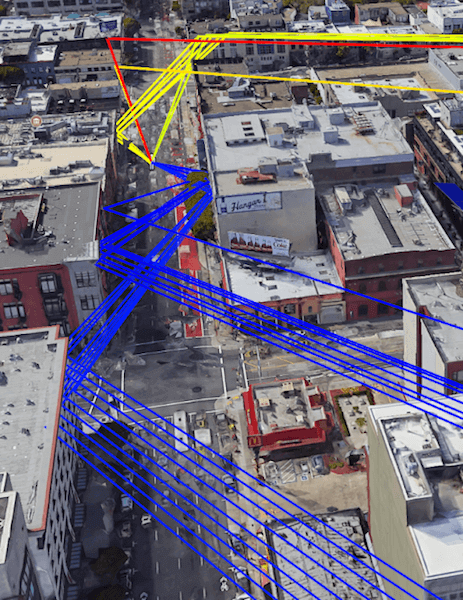

The calibration feature relies on Street View and a neural network that Google has trained to identify buildings, landmarks and other objects. The algorithms compare what the camera sees with a database of addresses and determine the user's current location almost exactly.
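The matching step described above can be illustrated with a toy sketch. This is not Google's implementation, which the article does not detail. It only assumes that each reference view and each camera frame has already been reduced to a numeric descriptor, and it picks the geotagged reference most similar to the query. The descriptors, place names, coordinates, threshold and the `locate` function are all hypothetical.

```python
import math

# Hypothetical reference database: (label, latitude, longitude, descriptor).
# The 4-number descriptors are made up; a real system would get them
# from a trained neural network applied to street-level imagery.
REFERENCE_VIEWS = [
    ("corner_cafe", 40.7411, -73.9897, [0.9, 0.1, 0.0, 0.2]),
    ("clock_tower", 40.7420, -73.9880, [0.1, 0.8, 0.3, 0.0]),
    ("bridge", 40.7400, -73.9910, [0.0, 0.2, 0.9, 0.4]),
]


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def locate(query_descriptor, references=REFERENCE_VIEWS, min_similarity=0.8):
    """Return (label, lat, lon, score) of the best match, or None if too weak."""
    best = max(references, key=lambda ref: cosine_similarity(query_descriptor, ref[3]))
    score = cosine_similarity(query_descriptor, best[3])
    if score < min_similarity:
        return None  # nothing in view resembles a known reference closely enough
    label, lat, lon, _ = best
    return label, lat, lon, score


if __name__ == "__main__":
    # A camera frame whose (made-up) descriptor resembles the cafe view.
    print(locate([0.88, 0.12, 0.05, 0.18]))
    # An ambiguous frame that matches no reference well.
    print(locate([0.5, 0.5, 0.5, 0.5]))
```

The similarity threshold stands in for the idea that a position should only be reported when the scene is recognised with some confidence. Otherwise the sketch returns nothing rather than guessing.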

The augmented reality calibration feature is meant for situations where GPS is unavailable for some reason. This happens, for example, in dense urban areas, where the signal penetrates poorly.

What Google Maps and Apple Maps have in common

Apple Maps has also learned to determine location using the camera

Incidentally, a similar feature recently appeared in Apple Maps. Apple has taught its maps to determine the current location using the smartphone's built-in camera. Admittedly, augmented reality is not involved there. Instead, the feature relies on Look Around mode, Apple's counterpart to Google's Street View, and on pre-built routes prepared by Apple's partners.

Because the Google Maps update is rolling out gradually, it is hard to tell whether the new calibration mode works outside the US. Apple Maps, for its part, allows camera-based calibration only in a handful of American cities: San Francisco, New York, Chicago, Washington DC, Seattle and Las Vegas.

This limitation exists because Look Around is available only in those cities, whereas Street View in Google Maps is available worldwide. There is therefore every reason to believe that calibrating Google Maps with the camera in AR mode will be possible not only in the United States but also in Russia and the CIS countries.

AR mode in Google Maps

Neural networks and an extensive database allowed Google Maps to work more efficiently with augmented reality

Frankly, I am very impressed by Google's approach to developing its services, and Google Maps in particular. The AR mode that appeared in the search giant's maps last year cannot run for more than a few minutes, since it puts a heavy load on both the processor and the battery. Even so, the very fact that it exists speaks to the company's outstanding results in augmented reality.

Neither Apple, nor Yandex, nor any other company has been able to achieve the same result so far, although they have tried. Unlike Google Maps, all other map apps either offer only virtual pointers for route guidance in AR or offer nothing at all. So if Google manages to tame the excessive power consumption, it will be a real breakthrough.